Generative AI and parliamentary TA – between potential, practice and reflection

Johanna Mehler, Steffen Albrecht, Julia Hahn, Christoph Kehl, Pauline Riousset, Bernd Stegmann, Christine Milchram | March 12, 2026

Promises surrounding AI, and the expectations associated with them, are particularly widespread in business. However, AI is also expected to play an important role in research. For example, the Royal Swedish Academy of Sciences awarded two Nobel Prizes in 2024 (for physics and chemistry) for the development and application of machine learning methods. In Germany, too, research organizations and scientific academies have launched initiatives and events dedicated to the potential of AI for research. Shortly after the release of ChatGPT at the end of 2022, the benefits of generative AI for literature research, data processing, scientific programming and science communication were already being highlighted (TAB 2023; Fecher et al. 2023).

On the other hand, the use of generative AI in research in particular is associated with a large number of risks. For example, because AI systems are black boxes, it is often neither transparent nor comprehensible how their output is produced. Hallucinations and possible bias resulting from distortions in the training data also continue to pose problems. In addition, language models seem to have a strong tendency to overgeneralise (Peters and Chin-Yee 2025) and to confirm the user's view. This often reduces output quality and leads to more hallucinations, even with larger models (Zhou et al. 2024). The use of generative AI also raises questions of responsibility and authorship for research content (Mejias 2025). There are further dangers as well, such as misuse for the targeted spread of disinformation (Fecher et al. 2025). Another is reaching the limits of our cognition when, with the help of LLMs, we produce more but understand less and less (Messeri and Crockett 2024). Initial studies suggest that generative AI can have a negative impact on critical thinking and other essential knowledge work skills (Lee et al. 2025). Last but not least, applying AI in research often requires developing software, and thus skills that are not sufficiently widespread in the scientific system (Kapoor and Narayanan 2025).

There seems to be a consensus that generative AI will fundamentally change the scientific process and that careful reflection is needed to preserve scientific integrity and competence. Even so, it remains to be seen what a balanced and safe integration of AI and human expertise can and should look like. We believe that this development should be proactively (co-)shaped. We welcome initiatives such as the Helmholtz AI Hub and the KI Toolbox of the Karlsruhe Institute of Technology, which provides secure access to various local and external LLMs. With the TAKI working group (see the introduction in the first blog post in this series), we ourselves would like to contribute to the responsible use of AI in science and in policy advice.

Parliamentary technology assessment operates at the interface between scientific analysis and parliamentary policy advice

Institutions such as the TAB meet scientific quality standards. However, they have specialised research formats and processes geared towards policy advice, and these give rise to their own use cases for generative AI. Compared with academic research, our work focuses more on science communication and must take into account criteria of political relevance and legitimacy (Jahnel et al. 2025). Large language models have been described as having the potential to make scientific policy advice more agile and targeted (Tyler et al. 2023). But which specific work steps is such support suitable for? To find out, the TAKI working group is exploring use cases in areas of work that are particularly relevant to parliamentary policy advice.

In these areas, we are testing various tools for specific tasks. We are also working to establish standard uses through shared prompts and comparing the performance of local and cloud-based models. Other potential uses of generative AI include image generation (Jahnel et al. 2025), training, and project and process management (De Longueville et al. 2025). Below we offer insights into two of our pilot projects.

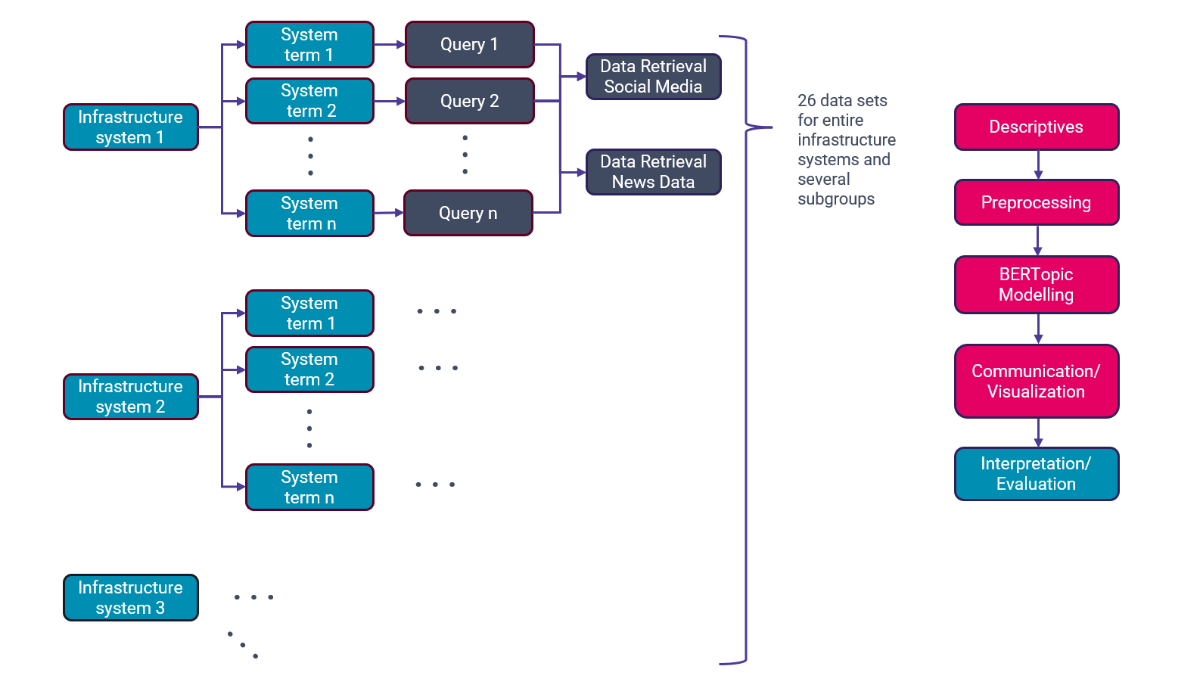

Pilot project 1: Topic modeling for trend analysis of infrastructure systems

Digital methods can be used to analyse large amounts of data and thus identify early signs of potentially significant socio-technological changes and capture social attitudes (Riousset et al. 2024). In parliamentary TA, such applications are used as part of foresight activities (Committee for the Future 2025; POST 2024). Generative AI offers the potential to detect evidence of trends more quickly through automated screening and data extraction (Tyler et al. 2023), and to take into account data in many different languages (Berger-Tal et al. 2024). As part of TAB's foresight activities, we complemented the qualitative analysis of trends in selected documents with a quantitative step. Using topic modeling, a machine learning method, we evaluated over 1.2 million social media posts and newspaper articles, and then summarised the identified topics with the help of generative AI.

On the one hand, our tests confirm known problems, such as distortions caused by an unbalanced database and false results, which require time-consuming validation (Sietsma et al. 2024; Parisi and Sutton 2024; Cheng and Zhang 2025; Wilson 2024). On the other hand, the information obtained in this way has proven useful in several respects: validating interim results of the manual search, uncovering gaps, and better understanding and relating developments to one another. Further work is needed on the search strategy and on the quality of the database. Another aim is to make the topics found easier to interpret and to reduce the overall time required. We will iteratively improve this multi-stage approach over the next few cycles and are excited to see where this journey takes us.

Pilot project 2: Feedback and quality checks with AI support

The second experiment is based on the idea of receiving feedback from AI "colleagues" who are "trained in everything" and never get tired (Jones 2025). Ideally, customised chatbots enable researchers to integrate additional perspectives into their texts (Jones 2025; Naddaf 2025). However, previous experience has shown that the results are often neither reliable nor reproducible. Instead, they depend on the training data and on the models' stochastic decisions (Jones 2025; Xames 2025; Naddaf 2025; Ye et al. 2024). In our experiment, we created three chatbot personas to review texts against various quality criteria of policy advice. The first was a scientific advisory board and the second a policy advisor. The third was a "societal sounding board", i.e. a diverse body of representatives from society tasked with giving feedback on the inclusivity, accessibility and social resonance of the texts presented.

In October 2025, we took a critical look at the three chatbots together with EPTA practitioners at a meeting in Bern. Applying the chatbots to an EPTA report made the strengths and weaknesses of our virtual "colleagues" visible, and these were debated intensely. The chatbots were convincing at identifying redundant sections and statements that reflected hype more than actual developments. Participants also found the suggestions on language and wording helpful. The same was true of the pointers to possible biases and to ways of making the text easier to read.

However, the chatbots' feedback also proved to be incomplete and sometimes went beyond what was asked for, for example when political recommendations were unexpectedly included. The tendency to confirm users' views, described in the first section, also led to an exaggerated emphasis on the text's strengths. The use of such chatbots was therefore a matter of debate among EPTA colleagues. Some felt that the chatbots cost more time than they save, while others appreciated the additional information they provide. Nevertheless, participants saw potential in developing better-defined prompts and roles that more accurately reflect the actual work processes in policy advice. Another promising approach could be to have feedback provided not by a single chatbot but by an entire sounding board of different bots. The hope would be to gain more insight into their reasoning and more control over how the perspectives are weighted, and thus to make the chatbots more useful. This experiment, too, calls for a follow-up.

Reflecting on changes to working practices through generative AI

Beyond the specific opportunities and challenges, there is also the question of how the use of generative AI changes knowledge generation processes in TA. The ITAS colleagues involved in the working group are therefore conducting short interviews with TAB staff. The interviews explore which tools are used in which contexts, how staff experiment with them and how knowledge production is changing. The first four interviews provide a snapshot that can be summarised as follows. Overall, interviewees perceive a certain acceleration in knowledge production, although much is still in "testing phases". They also perceive that generative AI leads to more text material being produced, which in turn makes the use of these tools necessary. There is a certain tension here between the efficiency gains from the tools and the potential dependency that comes with them. Usage patterns may also be shifting. The previous focus on writing texts with the help of generative AI could change as data selection and maintenance, as well as quality control, move to the fore. Such monitoring of and reflection on work with generative AI is clearly very important. It helps us recognize added value and opportunities for parliamentary TA and continuously keep an eye on the limits of AI use.

Our new working reality - an interim conclusion

With new AI tools in the cooking pot of digital technology assessment methods, the proof of the pudding is in the eating. In some areas, generative AI delivers results that encourage its further use. With its help, larger amounts of data can be processed, as in the research for the TAB's foresight reports. Thanks to generative AI and other machine learning techniques, these foresight analyses now have a more quantitative foundation. Generative AI can also serve as a sparring partner in the preparation of TAB reports and broaden the desired diversity of perspectives. Close integration with individual work processes has proven important here for two reasons. First, the way in which AI is used depends heavily on individual procedures and preferences. Second, it allows human oversight of the results to be retained. Such oversight is always necessary and, in our experience, requires not only technical expertise but also a lot of time. So far, therefore, using AI for more complex applications has not resulted in any significant time savings overall. Such savings do occur, however, for smaller, repetitive tasks, such as AI-based translations or image descriptions, which we also used for this article. The same applies when generative AI is used for intermediate steps in more comprehensive studies, such as trend analysis. AI applications are varied and come with promises of easier work. Further experiments are therefore needed to identify the cases in which using AI actually offers advantages. Reflective monitoring is also necessary to identify side effects that may not be obvious but run counter to the objectives of parliamentary TA.

We would like to engage in an exchange about working with generative AI. Please feel free to share your experiences or questions with us – they will enrich the discourse and feed into the next articles in the "AI and parliamentary TA" series.

Contact: info∂tab-beim-bundestag.de
